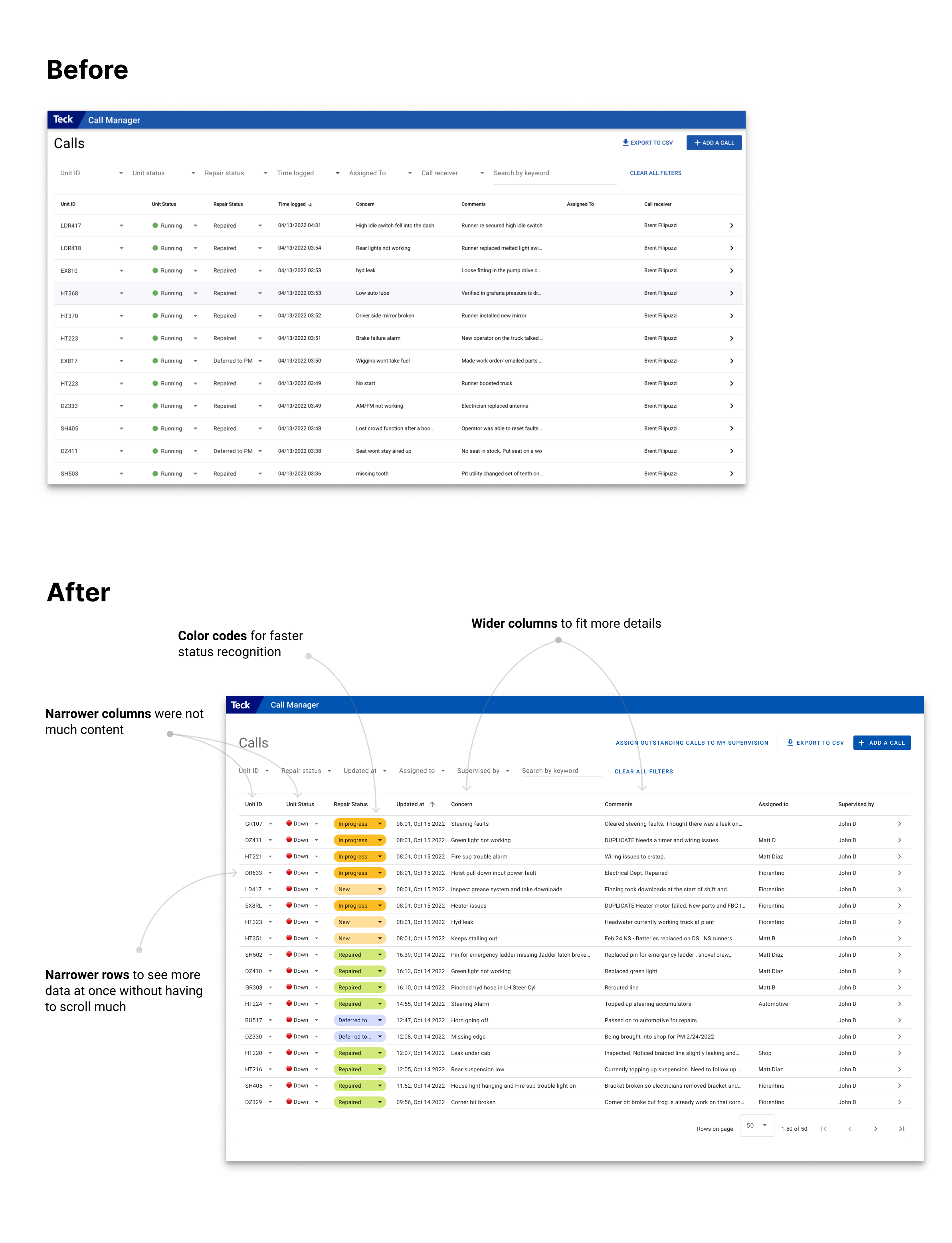

Problem 3: Time Spent Updating Calls

- Supervisors needed to track both outstanding calls from previous shifts and new issues.

- The app lacked a simple way to group calls needing attention.

- Supervisors had to manually filter calls and assign them to themselves (about 30 calls per shift), which was time-consuming.

Solution

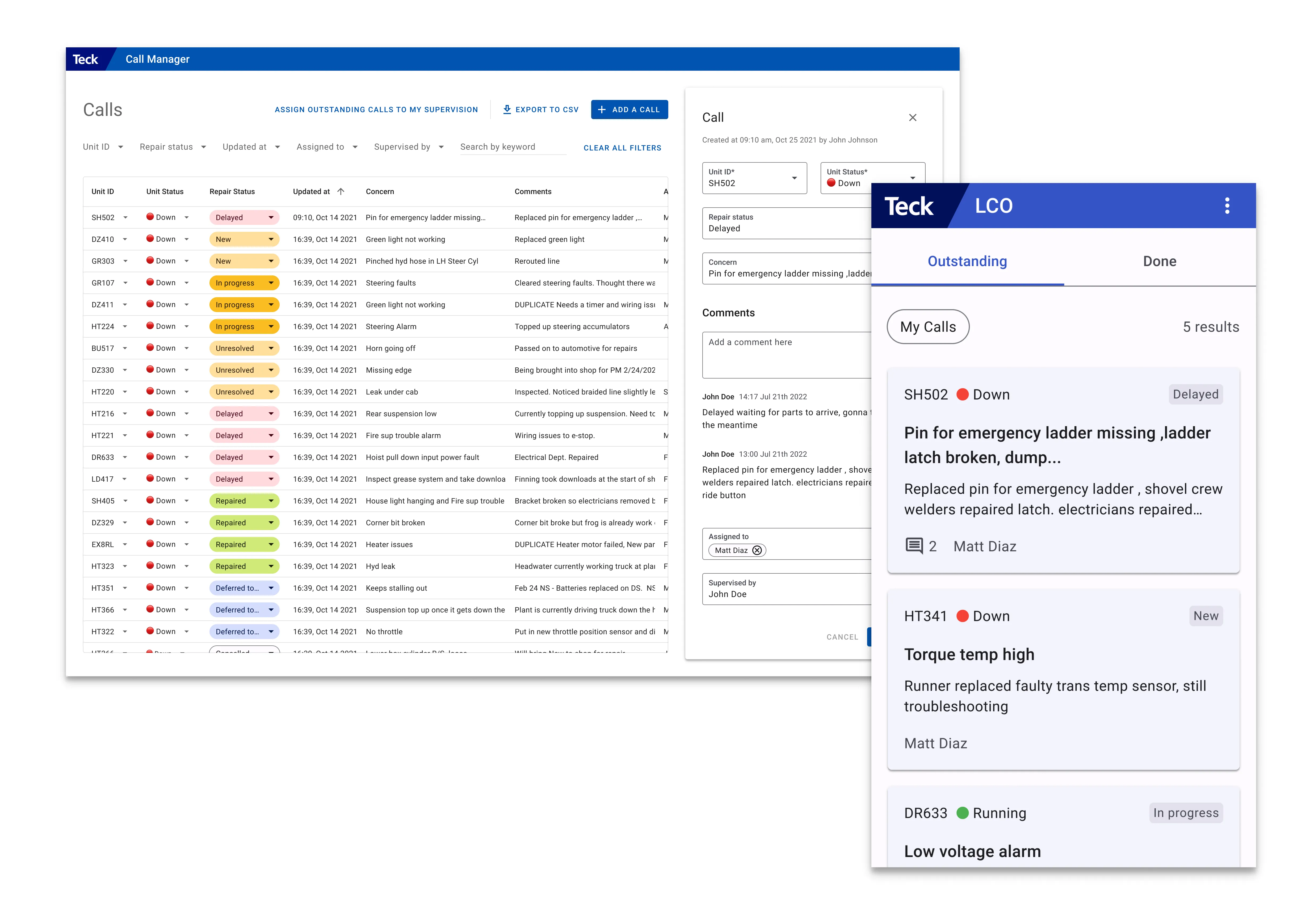

Implemented a button to assign all outstanding calls to a supervisor with one click, saving around 20 minutes per shift.
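As a rough illustration of what the one-click action does, here is a minimal sketch. It is not the app's actual code: the `Call` shape, its field names, and `assignAllOutstanding` are hypothetical, and it assumes that outstanding calls are reassigned even if someone else already holds them.

```typescript
// Hypothetical data model: these field names are illustrative assumptions,
// not taken from the real app.
interface Call {
  id: string;
  status: "outstanding" | "resolved";
  assignedTo: string | null;
}

// Returns a new list in which every outstanding call is assigned to the
// given supervisor. Resolved calls are returned unchanged.
function assignAllOutstanding(calls: Call[], supervisorId: string): Call[] {
  return calls.map((call) =>
    call.status === "outstanding" ? { ...call, assignedTo: supervisorId } : call
  );
}

// Example: what a single button click would trigger.
const shiftCalls: Call[] = [
  { id: "c1", status: "outstanding", assignedTo: null },
  { id: "c2", status: "resolved", assignedTo: "sup-7" },
  { id: "c3", status: "outstanding", assignedTo: "sup-2" },
];
const updatedCalls = assignAllOutstanding(shiftCalls, "sup-1");
```

Doing the filter and the assignment in one pass is what replaces the manual filter-then-assign routine that supervisors previously repeated for each call.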

Results

- 👍 Log Analysis: Updates per call fell by 70% in the month following the release.

- 👍 Usability Testing: Supervisors saved ~10 minutes in total when updating 30 calls each shift.

- 👍 User Feedback: Supervisors reported increased efficiency and satisfaction with the new functionality.