Challenges & decisions

Building trust from scratch. The site had worked with our team before but felt abandoned when leadership pulled back. That history made early research conversations difficult: users weren't initially interested in explaining their processes; they wanted to be heard. I adjusted. The first sessions were mostly listening, with occasional deeper questions nobody had asked them before: Do you know where this data comes from? Has anyone ever trained you on this? Those questions opened things up. Within a couple of sessions, one key user was reachable directly on Teams at any time, with no customer success intermediary needed. That access changed the quality of research we could do.

Researching from a distance. The site was three hours away and supervisors were busy during shifts, so direct observation time was limited. I compensated with deep secondary research: internal documents, established process records, and telemetry data from their existing PowerBI reports. As a result, when I did get face time, conversations were immediately meaningful rather than introductory, which helped sustain the trust built early on.

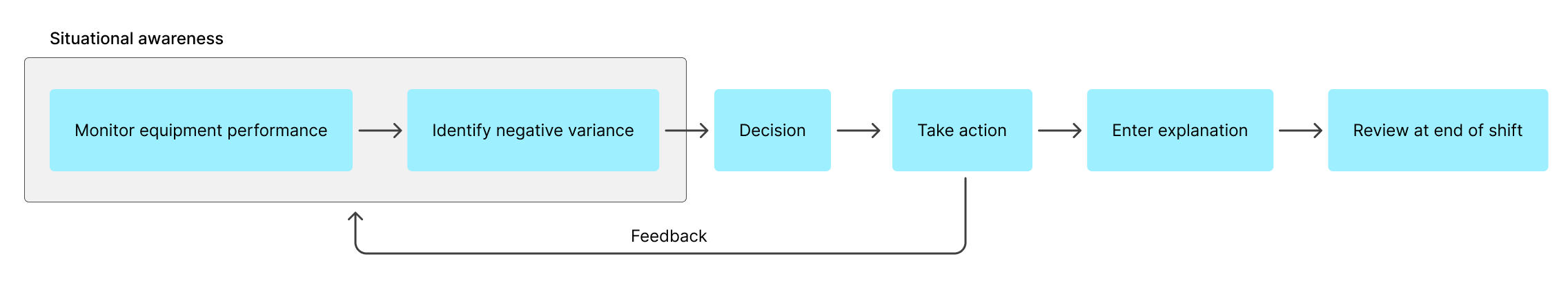

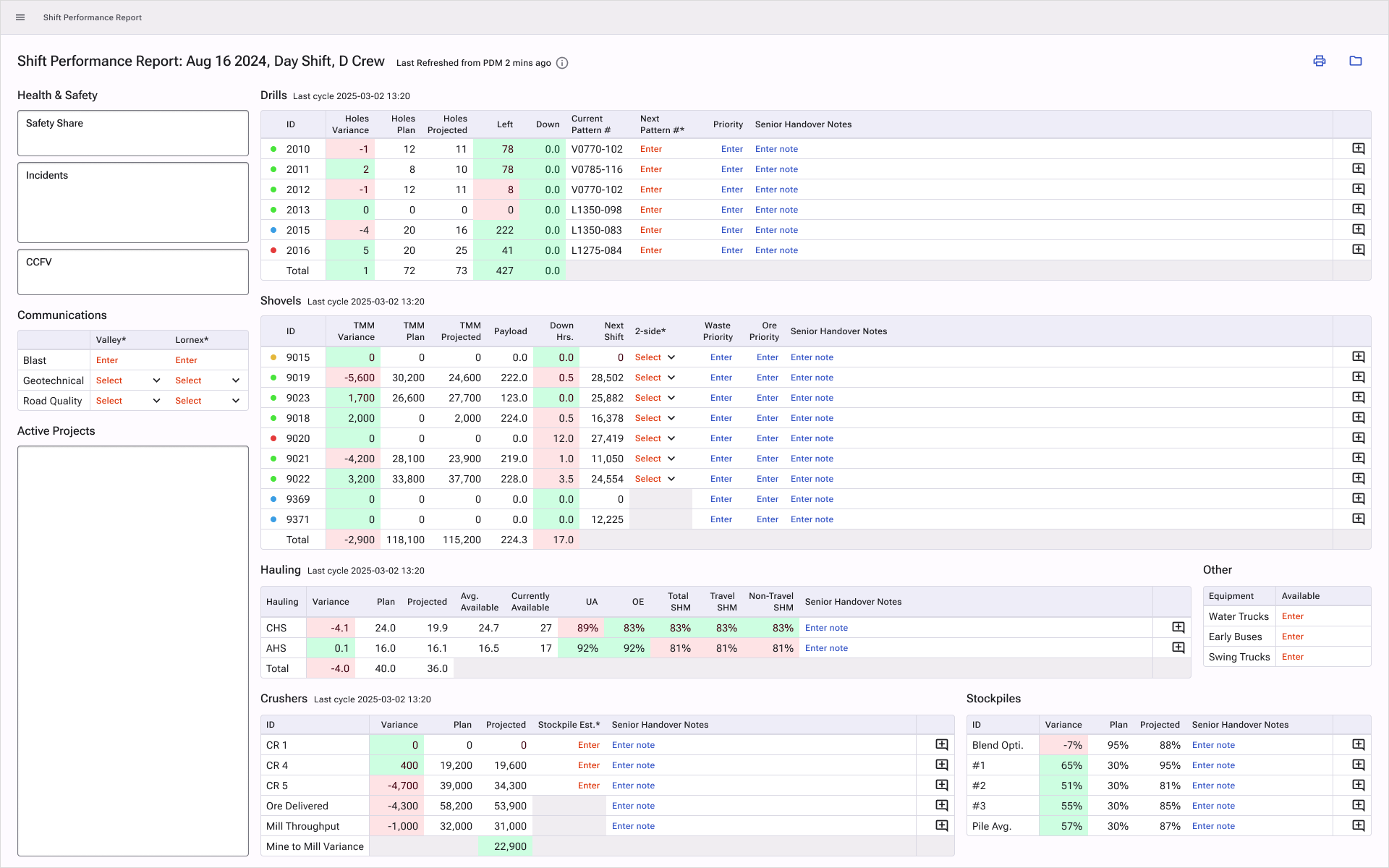

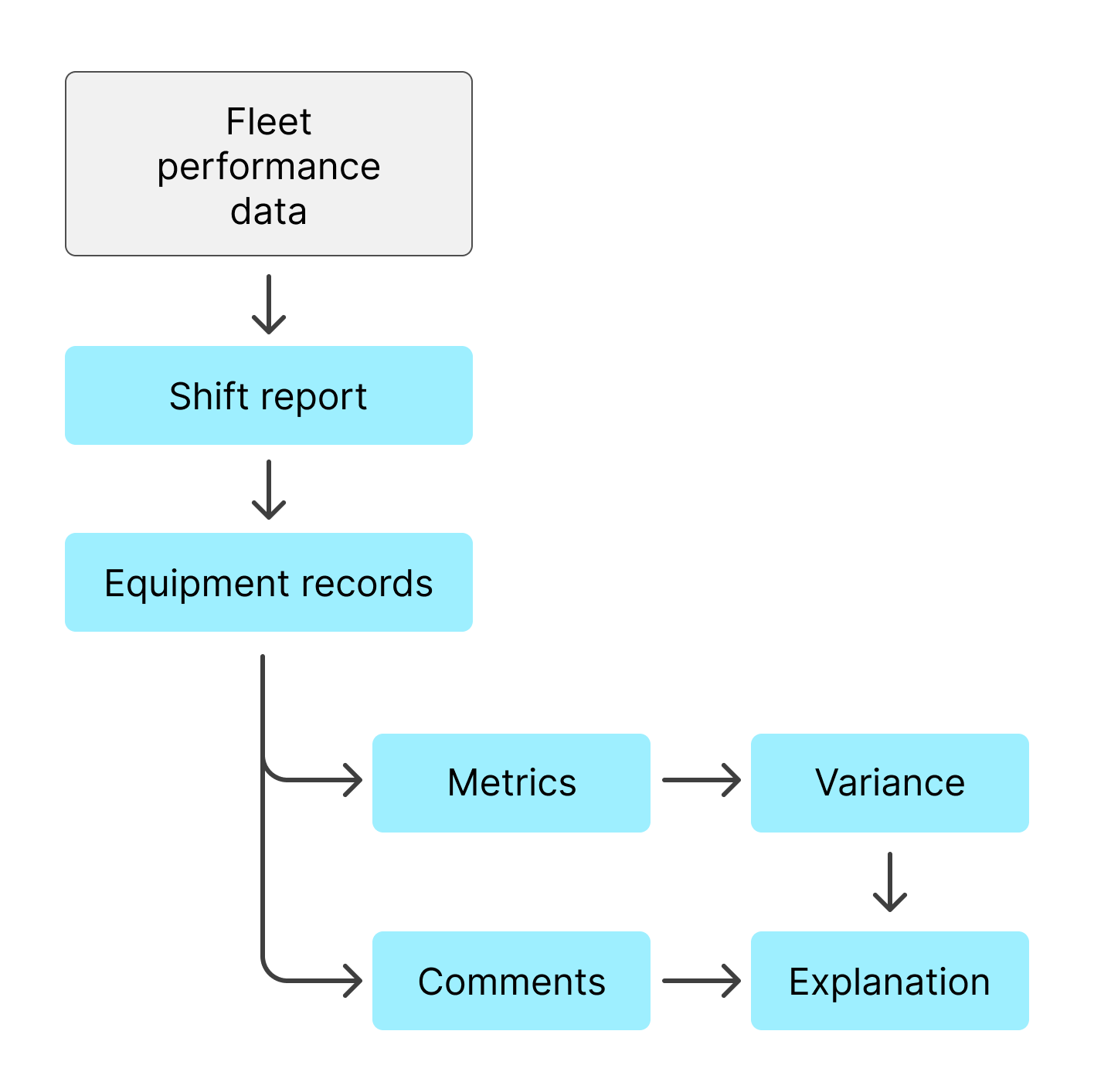

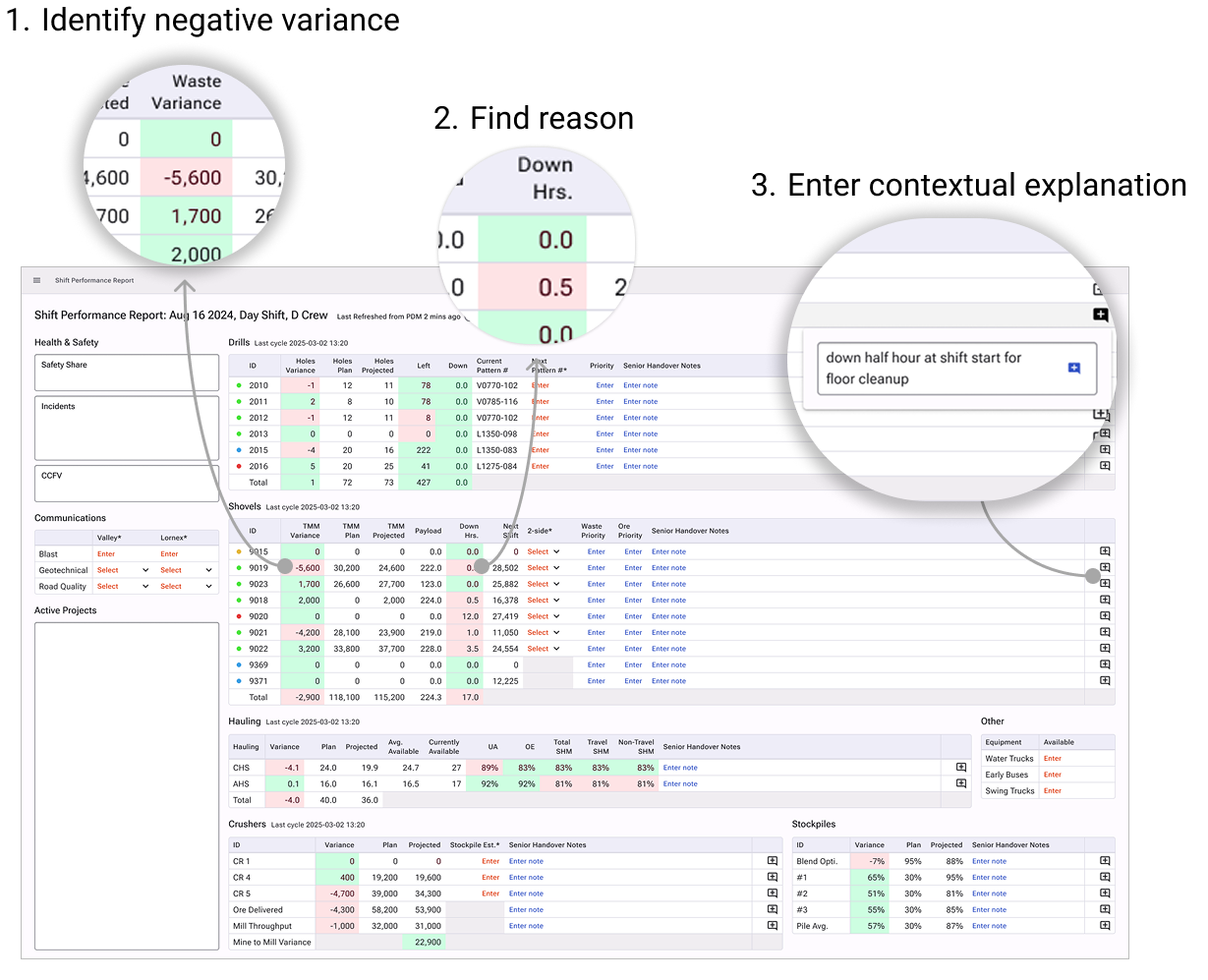

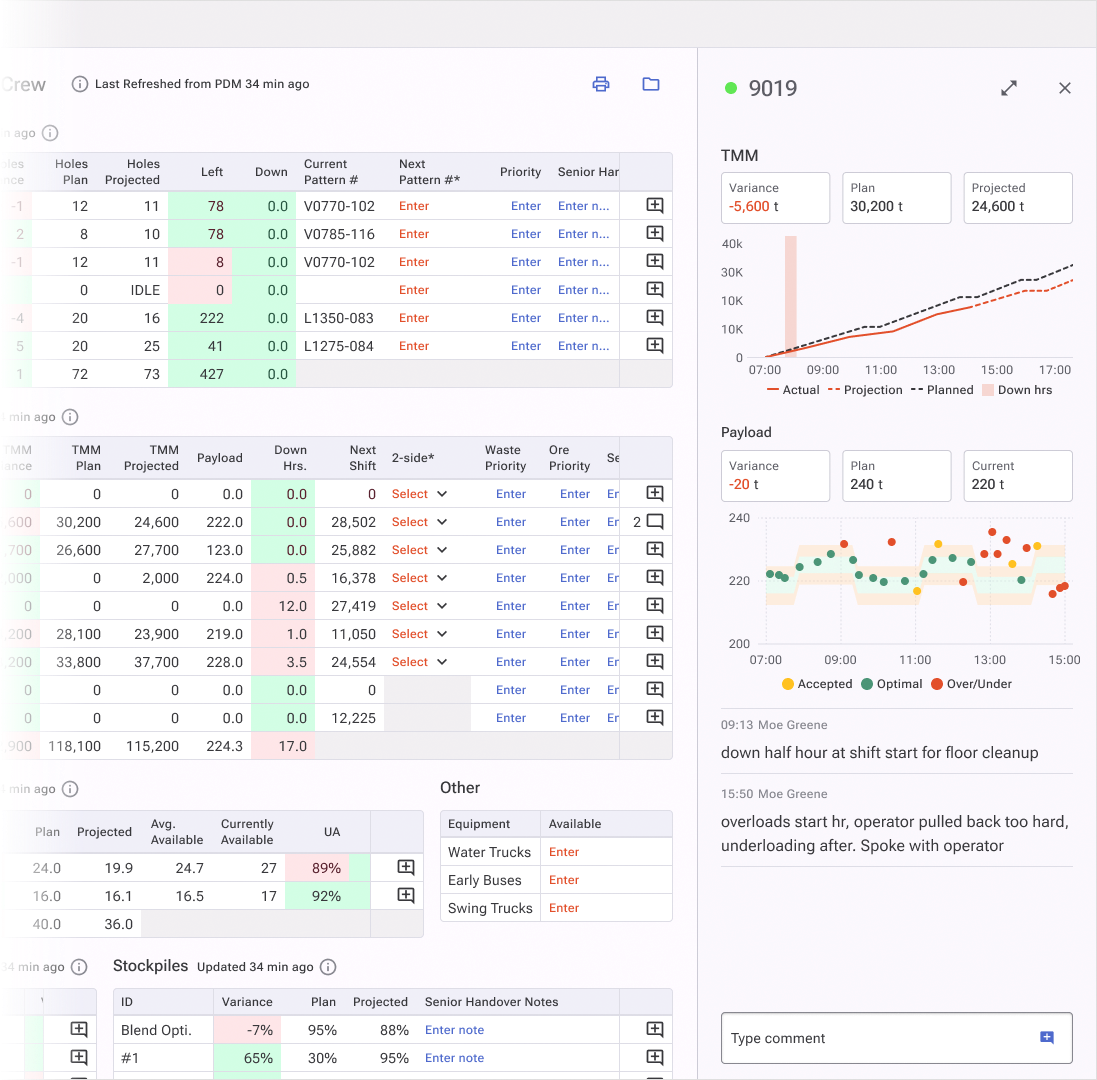

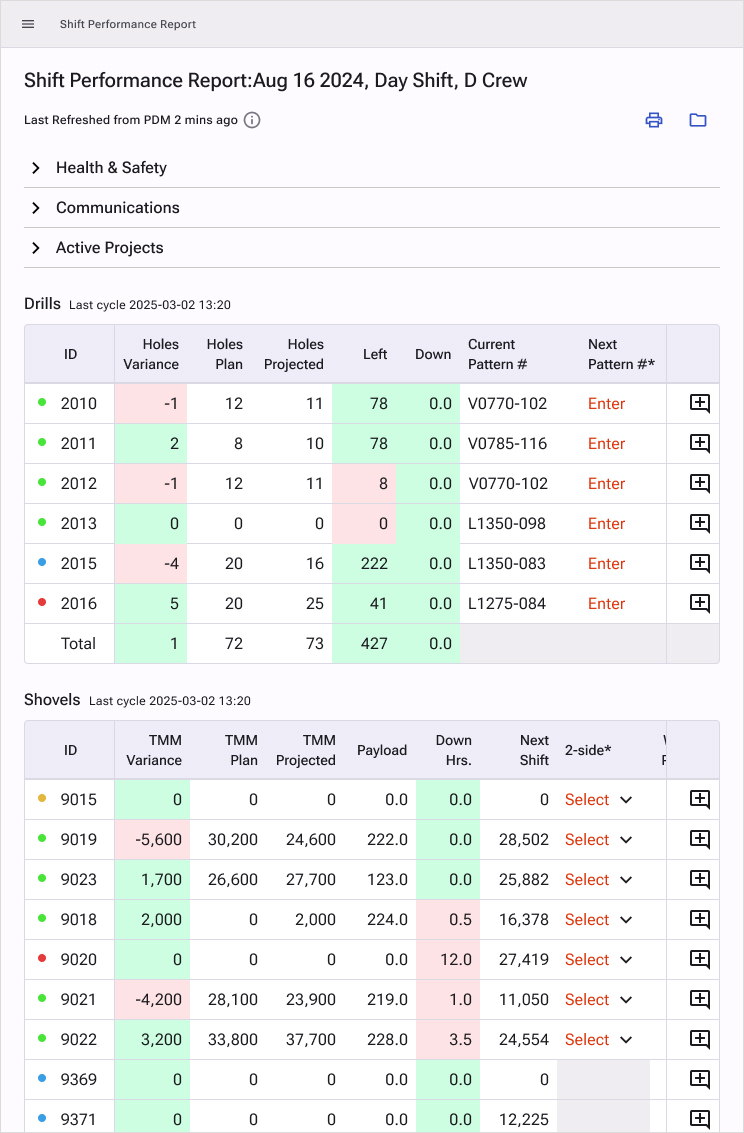

Pushing back on scope. After an early site visit, the team proposed building predictive production recommendations. I'd seen that kind of ambition compress badly under time pressure before. With three months total and a site that was still warming up to us, I argued for starting with the two biggest pain points I'd identified in research: report creation and performance monitoring. I also proposed keeping the design close to what supervisors already knew, to flatten the learning curve and improve adoption odds. The team went with report creation. Results exceeded expectations.

Negotiating the design system. Engineers wanted to use an existing UI framework to save time. I wanted to build something new. Time won, but I negotiated three conditions before agreeing: rebranding (color and typography) was non-negotiable, I had approval rights on the framework choice (we landed on MUI for its depth of complex table support), and I retained the right to propose custom components where nothing available met user needs. In the end, a small number of low-complexity custom components were built. The rest was MUI.

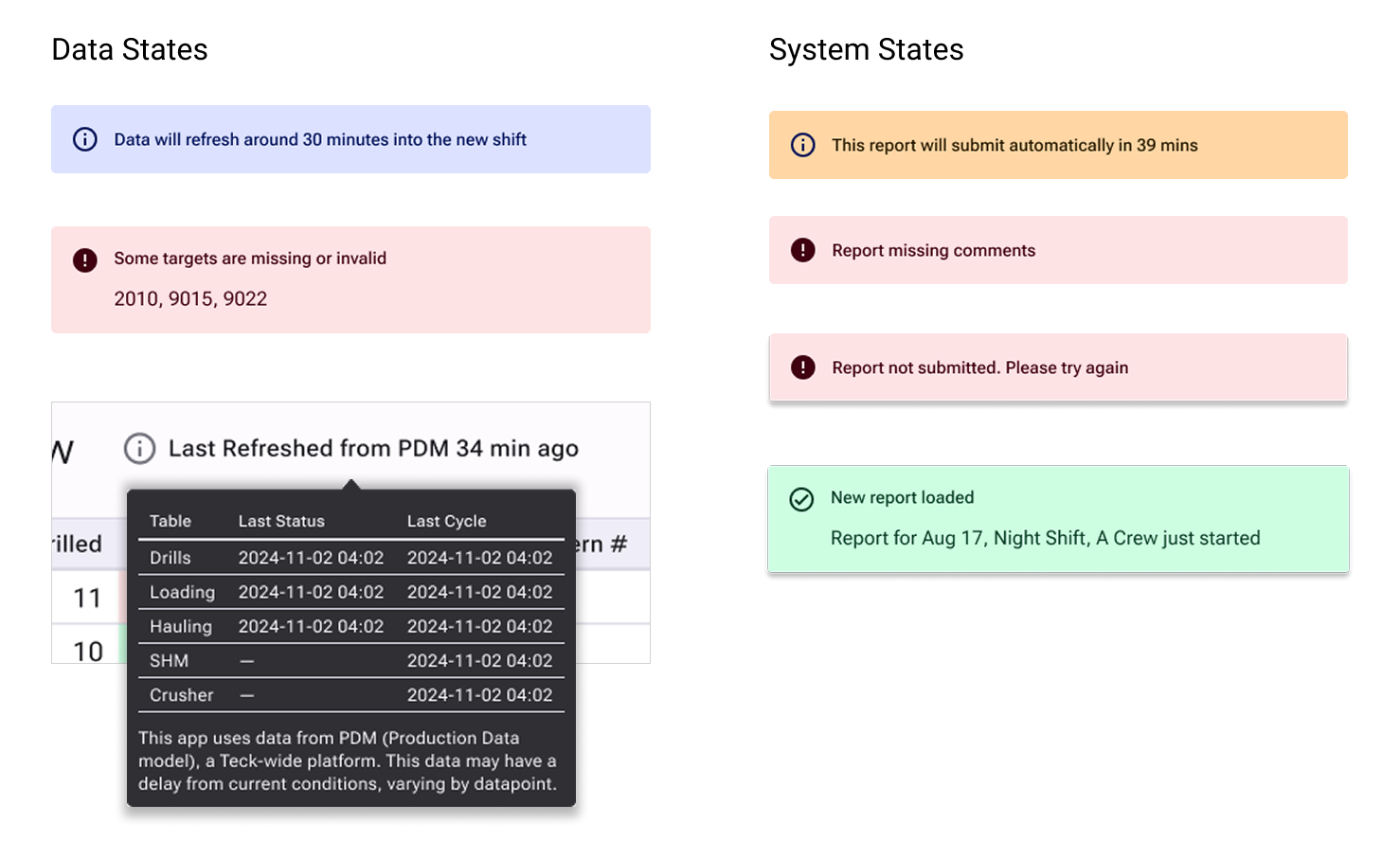

Owning validation when no one else would. There was no plan to monitor app performance post-launch, and the team was reluctant to prioritize it. I took ownership by asking for only a few hours of engineering time. I defined the exact events and metadata to track, then handled all the cleaning, analysis, and reporting myself using Databricks and PowerBI. What started as a personal initiative became the source of truth for the whole team: customer success used it for site interactions, leadership used it for monthly performance reviews, and site managers used it to track comment compliance. I owned and maintained both reports: one for compliance visibility across the team, and one for deeper event-tracking analysis for the product team only. Rather than over-engineering, we prioritized structured clarity and adoption first, then used telemetry to guide iteration.